Abstract:

Training with a larger number of parameters while keeping iterations fast is an increasingly

adopted strategy for developing better-performing Deep Neural

Network (DNN) models. This increases the memory footprint and

computational requirements for training. Here we introduce a novel methodology

for training deep neural networks using 8-bit floating point (FP8) numbers.

Reduced bit precision allows for a larger effective memory and increased computational

speed. We name this method Shifted and Squeezed FP8 (S2FP8). We

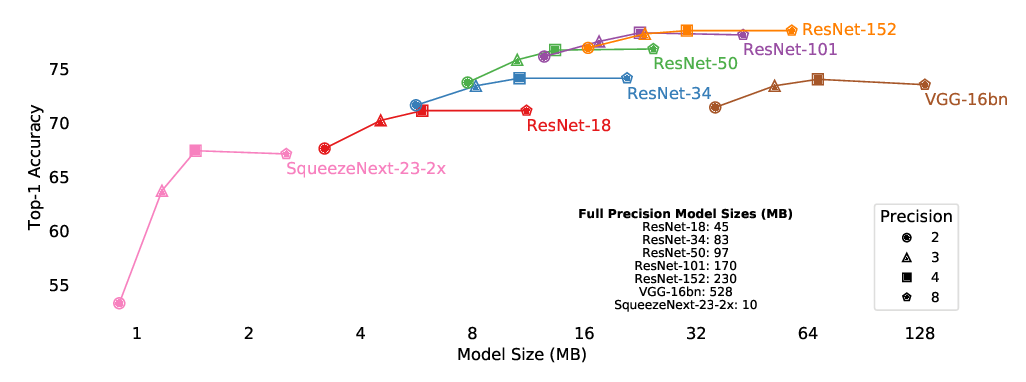

show that, unlike previous 8-bit precision training methods, the proposed method

works out of the box for representative models: ResNet50, Transformer and NCF.

The method can maintain model accuracy without requiring fine-tuning of loss-scaling

parameters or keeping certain layers in single precision. We introduce two

learnable statistics of the DNN tensors, the shifted and squeezed factors, which are used

to optimally adjust the range of the tensors in 8 bits, thus minimizing the loss of

information due to quantization.
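
The range adjustment described above can be illustrated with a small NumPy sketch. The abstract does not give the formulas, so everything concrete here is an assumption made for illustration: the 8-bit format is taken to be 1 sign, 5 exponent, and 2 mantissa bits without subnormals; the two factors (called `alpha` for squeeze and `beta` for shift) are computed from the mean and maximum of log2|x| rather than learned; and the names `fake_fp8`, `shift_squeeze_roundtrip`, and `target_max` are hypothetical.

```python
import numpy as np


def fake_fp8(x, mant_bits=2, min_exp=-14, max_exp=15):
    """Round x onto a toy 1-5-2 float grid (assumed layout, no subnormals)."""
    x = np.asarray(x, dtype=np.float64)
    m, e = np.frexp(x)                       # x = m * 2**e with 0.5 <= |m| < 1
    scale = 2.0 ** (mant_bits + 1)
    m = np.round(m * scale) / scale          # keep mant_bits + 1 significant bits
    y = np.ldexp(m, e)
    max_val = (2.0 - 2.0 ** -mant_bits) * 2.0 ** max_exp
    y = np.clip(y, -max_val, max_val)        # saturate instead of overflowing
    return np.where(np.abs(y) < 2.0 ** min_exp, 0.0, y)  # flush tiny values


def shift_squeeze_roundtrip(x, target_max=15.0):
    """Map x into the toy FP8 range, quantize, and map back.

    Assumed transform: log2|y| = alpha * log2|x| + beta, with alpha and beta
    chosen so that log2|y| has mean 0 and maximum target_max.
    """
    x = np.asarray(x, dtype=np.float64)
    nz = x != 0
    log_abs = np.log2(np.abs(x[nz]))
    mu, m = log_abs.mean(), log_abs.max()
    alpha = target_max / max(m - mu, 1e-12)  # squeeze factor
    beta = -alpha * mu                       # shift factor
    y = np.zeros_like(x)
    y[nz] = np.sign(x[nz]) * 2.0 ** (alpha * log_abs + beta)
    y_q = fake_fp8(y)
    # Invert the transform on the quantized values.
    x_hat = np.sign(y_q) * (2.0 ** -beta * np.abs(y_q)) ** (1.0 / alpha)
    return x_hat, alpha, beta


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    grads = 1e-6 * rng.standard_normal(1000)  # values far below the toy FP8 range
    direct = fake_fp8(grads)
    adjusted, alpha, beta = shift_squeeze_roundtrip(grads)
    print("nonzero after direct cast:   ", np.count_nonzero(direct))
    print("nonzero after shift/squeeze: ", np.count_nonzero(adjusted))
```

In this sketch, a tensor whose magnitudes sit far below the representable range is flushed to zero by a direct cast, while the shifted and squeezed version keeps most values with a small relative error. Values far below the tensor's typical magnitude can still be lost, which is a limitation of this toy format rather than a statement about the proposed method.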