Abstract:

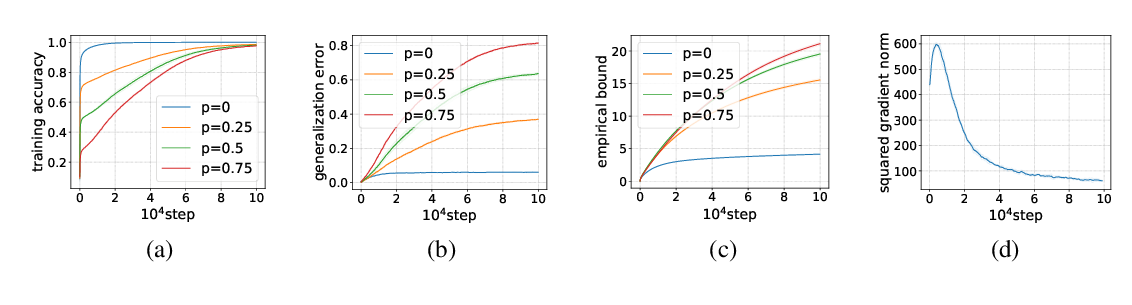

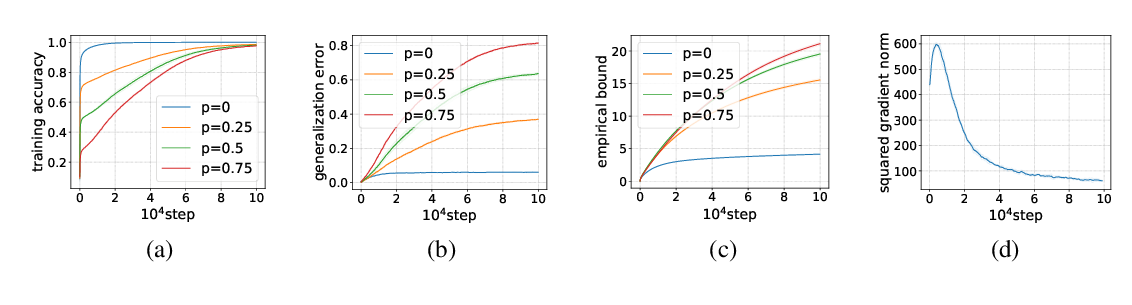

One of the biggest open issues in deep learning theory is explaining the generalization ability of networks with very large model size.

Classical learning theory suggests that overparameterized models overfit.

However, the large deep models used in practice avoid overfitting, which classical approaches do not explain well.

To resolve this issue, several attempts have been made.

Among them, the compression-based bound is one of the most promising approaches.

However, a compression-based bound applies only to the compressed network, not to the original non-compressed network.

In this paper, we give a unified framework that converts compression-based bounds into bounds for the original non-compressed networks.

The resulting bound gives an even better rate than the one for the compressed network by improving the bias term.

By establishing this unified framework, we obtain a data-dependent generalization error bound that gives a tighter evaluation than data-independent ones.